Interacting with Large Language Models (LLMs) offers incredible potential, but crafting effective prompts often feels like navigating a complex maze. While prompt design and engineering are accessible ways to guide LLMs, achieving consistently high-quality output requires skill and iteration. This process, known as AI prompt optimization, is crucial for unlocking the full power of generative AI.

Manually refining prompts can be demanding. Prompt engineering is a relatively new discipline, and its techniques are still evolving. Finding the right combination of instructions and examples for one model doesn’t guarantee success with another, so migrating or adapting prompts takes significant effort. This challenge can result in “prompt fatigue” for those building LLM-based applications. Understanding and applying AI prompt optimization techniques, including specialized tools, can streamline this process, saving time and improving the performance of your AI solutions. This article explores the fundamentals of AI prompt optimization, why it’s essential, and how automated tools reduce the manual work involved.

Understanding the Challenge: Why Optimize AI Prompts?

Getting the best results from an LLM isn’t always straightforward. Several factors make AI prompt optimization a necessary step:

- LLM Sensitivity: Models can be highly sensitive to the wording, structure, and nuances of a prompt. Minor changes can lead to vastly different outputs.

- Complexity of Tasks: For complex tasks, precisely conveying the desired context, constraints, and output format requires careful prompt construction.

- Model Variability: A prompt that works well with one LLM might perform poorly with another due to differences in training data, architecture, or fine-tuning. Optimization helps tailor prompts to specific models.

- Consistency and Reliability: Optimized prompts lead to more predictable and reliable outputs, which is critical for production-level AI applications.

- Efficiency: Poorly constructed prompts can waste computational resources and time, requiring multiple attempts to get a usable result. Optimization aims for effective results on the first try.

- Achieving Specific Outcomes: Whether aiming for a particular tone, style, format, or level of detail, optimization helps align the LLM’s output with specific requirements.

Addressing these challenges through systematic AI prompt optimization is key to developing robust and effective generative AI applications.

Core Concepts in AI Prompt Optimization

Effective optimization hinges on understanding the key components of a prompt and how they influence LLM behavior:

- Instructions: This is the core guidance provided to the LLM. It typically includes:

  - System Instruction: High-level directives about the AI’s persona, role, or general behavior.

  - Context: Background information necessary for the LLM to understand the specific situation or query.

  - Task: The specific action or question the LLM needs to address.

- Demonstrations (Few-Shot Examples): Providing examples of desired input-output pairs helps the LLM understand the expected format, style, tone, or reasoning process. This is particularly useful for complex or nuanced tasks.

- Labeled Data: For automated optimization techniques, a set of labeled examples (input query and corresponding ideal output or ground truth) is often required. This data allows optimization tools to evaluate the performance of different prompt variations.

Mastering the interplay between clear instructions and relevant demonstrations is central to successful AI prompt optimization.
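To make these components concrete, here is a minimal Python sketch of how they might be combined into a single prompt string. The `PromptParts` and `Demonstration` classes, their field names, and the "Input:/Output:" layout are assumptions made for illustration; they do not come from any particular library or model provider.

```python
from dataclasses import dataclass, field


@dataclass
class Demonstration:
    """One few-shot example: an input and the output we want for it."""
    input_text: str
    output_text: str


@dataclass
class PromptParts:
    """Hypothetical container for the prompt components described above."""
    system_instruction: str
    context: str
    task: str
    demonstrations: list[Demonstration] = field(default_factory=list)

    def render(self) -> str:
        """Join the components into one prompt, demonstrations before the task."""
        sections = [self.system_instruction, f"Context: {self.context}"]
        for demo in self.demonstrations:
            sections.append(f"Input: {demo.input_text}\nOutput: {demo.output_text}")
        # The task comes last, with an open "Output:" for the model to complete.
        sections.append(f"Input: {self.task}\nOutput:")
        return "\n\n".join(sections)


parts = PromptParts(
    system_instruction="You are a concise cooking assistant.",
    context="The user is cooking for someone on a low-sodium diet.",
    task="What can I use instead of soy sauce?",
    demonstrations=[
        Demonstration("What can replace salted butter?", "Use unsalted butter."),
    ],
)
prompt = parts.render()
print(prompt)
```

Keeping the components separate like this makes it easier to vary one of them, such as the demonstrations, while holding the others fixed.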

Manual vs. Automated AI Prompt Optimization

Optimizing prompts can be approached manually or through automated methods:

- Manual Techniques: This involves iteratively refining prompts based on trial and error. Techniques include rephrasing instructions, adjusting the level of detail, experimenting with different few-shot examples, and A/B testing prompt variations. While intuitive, manual optimization can be time-consuming, requires significant expertise, and doesn’t scale well, especially when dealing with multiple models or complex tasks.

- Automated Techniques: This approach leverages algorithms and other models to systematically discover optimal prompt structures. Automated AI prompt optimization tools can explore a vast space of potential prompt variations (both instructions and demonstrations) much faster than manual methods. They typically use evaluation metrics to score candidate prompts against a dataset, progressively refining the prompt towards better performance.

How Automated AI Prompt Optimization Tools Work: A Closer Look

Automated AI prompt optimization services are emerging as powerful solutions to streamline prompt engineering. They generally operate on an iterative refinement principle:

- Input: The user provides a baseline prompt template (often separated into instructions and task/context), a target LLM, a dataset of labeled examples (input/output pairs), and specifies evaluation metrics (e.g., accuracy, relevance, fluency, custom metrics).

- Optimization Engine: An “optimizer” model generates candidate prompt variations. This might involve rewriting instructions, selecting different combinations of few-shot examples from the provided dataset, or both.

- Evaluation Engine: An “evaluator” model (or a computational metric) assesses the performance of the target LLM using each candidate prompt against the labeled dataset based on the chosen metrics.

- Selection: The system identifies the prompt variation that yields the best performance according to the specified metrics.

- Iteration: This process often repeats for a set number of steps, allowing the optimizer to learn from evaluations and propose progressively better prompts.

This automated approach significantly reduces the manual effort and time required, enabling users to find high-performing prompts efficiently, even across different LLMs.
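The loop described above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not the algorithm of any real service: `toy_target_model` stands in for an LLM call, `propose_candidates` stands in for an optimizer model, the metric is plain exact match, and all names and data are hypothetical.

```python
# Labeled examples: (input question, ground-truth answer). Toy data for illustration.
DATASET = [("2+2", "4"), ("3+5", "8"), ("10-7", "3")]
ANSWERS = dict(DATASET)

# Hypothetical edits the stand-in optimizer may append to an instruction.
SUFFIXES = ["Be polite.", "Explain your reasoning.", "Reply with the number only."]


def toy_target_model(instruction: str, question: str) -> str:
    """Stand-in for the target LLM: its output format depends on the instruction."""
    answer = ANSWERS[question]
    if "number only" in instruction.lower():
        return answer
    return f"The answer is {answer}."


def propose_candidates(instruction: str) -> list[str]:
    """Stand-in optimizer: a real one would use a model to rewrite the instruction."""
    return [f"{instruction} {s}" for s in SUFFIXES if s not in instruction]


def score(instruction: str) -> float:
    """Evaluator: exact-match accuracy of the target model over the labeled dataset."""
    hits = sum(toy_target_model(instruction, q) == truth for q, truth in DATASET)
    return hits / len(DATASET)


def optimize(baseline: str, steps: int = 3) -> tuple[str, float]:
    """Iteratively keep the best-scoring instruction found so far."""
    best, best_score = baseline, score(baseline)
    for _ in range(steps):
        for candidate in propose_candidates(best):
            candidate_score = score(candidate)
            if candidate_score > best_score:  # selection step
                best, best_score = candidate, candidate_score
    return best, best_score


best_instruction, best_score = optimize("Answer the question.")
print(best_instruction, best_score)
```

In this sketch the exact-match metric rewards the instruction that makes the toy model return a bare number. This shows how the choice of evaluation metric steers which prompt the loop selects.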

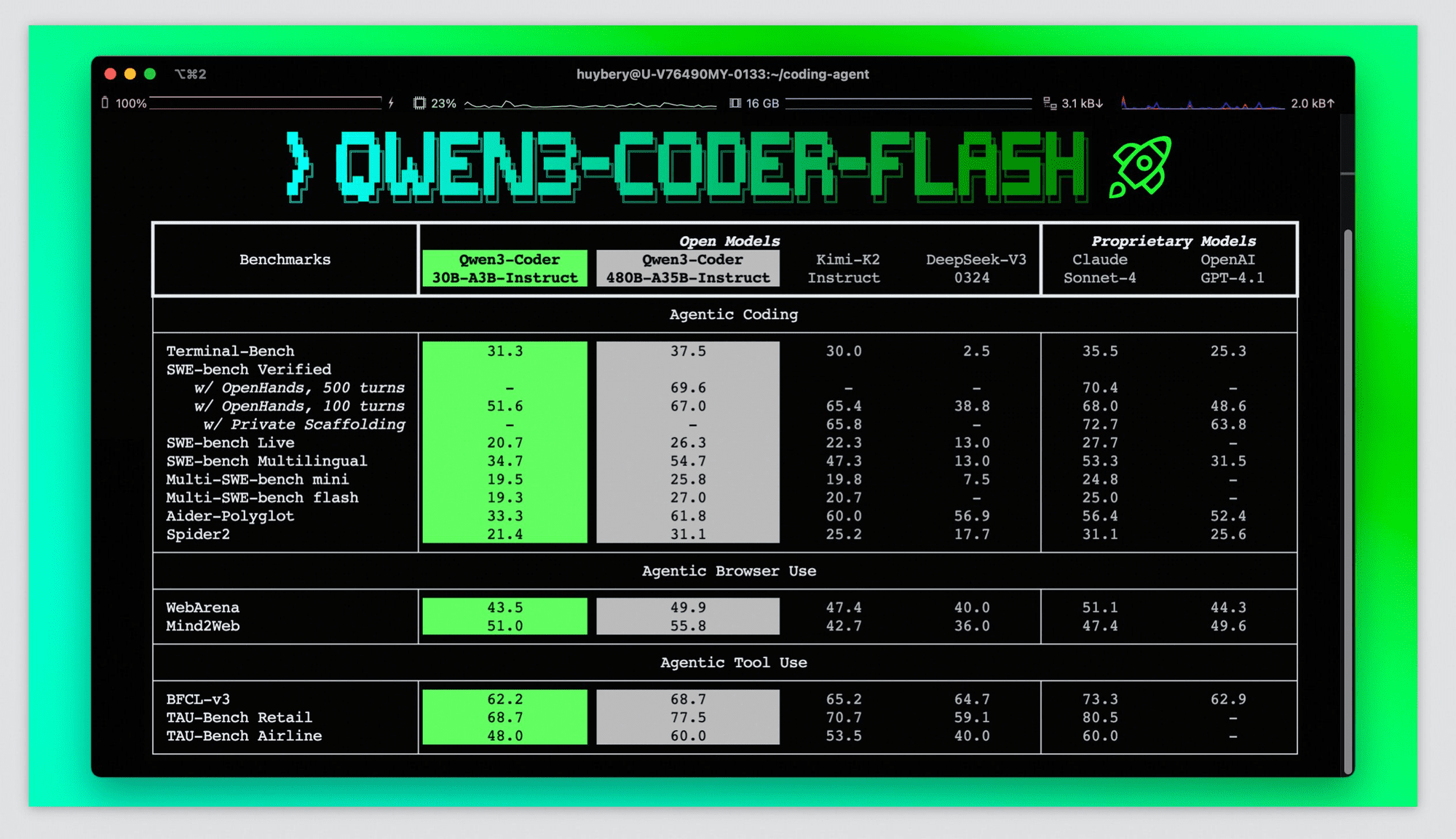

Example: AI Prompt Optimization with Vertex AI Prompt Optimizer

Google’s Vertex AI Prompt Optimizer provides a concrete example of an automated AI prompt optimization service. Built on research into automatic prompt optimization (APO), it helps users find the best combination of instructions and demonstrations for models available on Vertex AI.

Imagine developing an AI cooking assistant. An initial prompt for answering healthy cooking questions might be simple:

Given a question with some context, provide the correct answer to the question. \nQuestion: {{question}}\nContext:{{context}}\nAnswer: {{target}}
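To illustrate how the double-brace placeholders in this template get filled, here is a small Python sketch. It assumes the line breaks in the template are `\n` escapes. The `fill_template` helper and the sample values are hypothetical, and leaving `target` empty at inference time is also an assumption for illustration.

```python
import re

# The baseline template from the article, with "\n" as real line breaks.
TEMPLATE = (
    "Given a question with some context, provide the correct answer to the question. "
    "\nQuestion: {{question}}\nContext:{{context}}\nAnswer: {{target}}"
)


def fill_template(template: str, values: dict[str, str]) -> str:
    """Replace each {{name}} placeholder with values[name].

    Raises KeyError if the template names a placeholder that values lacks.
    """
    return re.sub(r"\{\{(\w+)\}\}", lambda m: values[m.group(1)], template)


# Hypothetical sample; "target" is left empty so the model supplies the answer.
sample = {
    "question": "How do I reduce salt in soup?",
    "context": "Low-sodium cooking guide.",
    "target": "",
}
rendered = fill_template(TEMPLATE, sample)
print(rendered)
```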

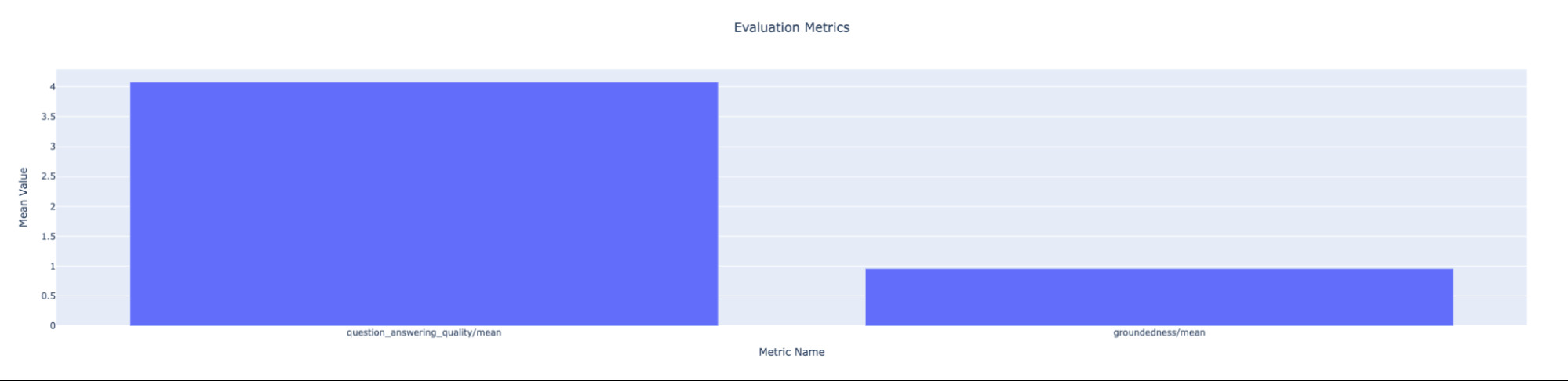

While this might produce decent results, evaluation using metrics like question-answering quality and groundedness might show room for improvement, especially if migrating to a different model like Gemini 1.5 Flash.

Graph showing initial evaluation metrics for an AI cooking assistant, with question_answering_quality/mean significantly higher than groundedness/mean.

Using a tool like Vertex AI Prompt Optimizer involves these general steps:

- Prepare Template: Define the initial instruction and context/task templates.

- Upload Labeled Samples: Upload a dataset (CSV or JSONL) of input questions and their desired “ground truth” answers to cloud storage.

- Configure Settings: Specify the target model (e.g., Gemini 1.5 Flash), evaluation metrics, optimization mode (instructions, demonstrations, or both), number of optimization steps, etc.

- Run Job: Execute the optimization process, often as a managed job (like a Vertex AI Training Custom Job).

- Get Optimized Prompt: The tool outputs the best-performing instruction template and/or set of demonstrations found during the optimization process.
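As an illustration of the labeled-samples step, the following Python sketch writes a few records to a local JSONL file and reads them back. The field names `question`, `context`, and `target` simply mirror the placeholders in the template above, and the records are invented. The exact schema a given tool expects is an assumption to check against its documentation, and the upload to cloud storage is not shown.

```python
import json
import tempfile
from pathlib import Path

# Hypothetical labeled samples: inputs plus the desired ground-truth answer.
samples = [
    {
        "question": "How do I reduce salt in soup?",
        "context": "Low-sodium cooking guide.",
        "target": "Add acid such as lemon juice and use unsalted stock.",
    },
    {
        "question": "What oil suits high-heat frying?",
        "context": "Notes on smoke points of common oils.",
        "target": "Use an oil with a high smoke point, such as avocado oil.",
    },
]


def write_jsonl(records: list[dict], path: Path) -> None:
    """Write one JSON object per line, the JSONL convention."""
    with path.open("w", encoding="utf-8") as f:
        for record in records:
            f.write(json.dumps(record, ensure_ascii=False) + "\n")


def read_jsonl(path: Path) -> list[dict]:
    """Read a JSONL file back into a list of dicts, skipping blank lines."""
    with path.open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]


with tempfile.TemporaryDirectory() as tmp:
    dataset_path = Path(tmp) / "labeled_samples.jsonl"
    write_jsonl(samples, dataset_path)
    loaded = read_jsonl(dataset_path)

print(len(loaded), "samples written and read back")
```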

Example report showing an optimized instruction discovered by Vertex AI Prompt Optimizer during a specific step.

The optimized prompt elements can then be integrated into the application.

Example of an improved answer generated by the AI cooking assistant using an optimized prompt from Vertex AI Prompt Optimizer.

Re-evaluating the LLM’s performance with the optimized prompt shows whether the targeted metrics actually improved. In this example, the mean question-answering quality score is higher than with the baseline prompt.

Graph showing improved evaluation metrics after using Vertex AI Prompt Optimizer, indicating a higher mean score for question_answering_quality.

Conclusion

AI prompt optimization is evolving from a manual art into a more systematic discipline. While prompt engineering remains crucial for interacting with LLMs, the inherent challenges of sensitivity, variability, and the time investment required for manual tuning necessitate better approaches. Automated AI prompt optimization techniques and tools offer a powerful solution.

By systematically refining instructions and selecting optimal demonstrations based on performance metrics, these tools help create high-performing prompts tailored to specific models and tasks. This saves valuable time and effort, improves the quality and consistency of LLM outputs, and ultimately accelerates the development of robust generative AI applications. As LLMs continue to advance, mastering AI prompt optimization will be increasingly vital for harnessing their full potential. Explore the techniques and consider leveraging automated tools to enhance your own AI projects.